AIRun: Run AI Prompts Like Programs

This is a working AI program:

#!/usr/bin/env -S ai --opus --live

Summarize these git commits into release notes.

Save it as release-notes.md, make it executable (chmod +x release-notes.md), and run it:

git log --oneline -20 | ./release-notes.md > CHANGELOG.md

Twenty commits go in, formatted release notes come out. The script reads from stdin, processes the input with Claude Opus, and writes clean markdown to stdout: the same input/output contract that grep, sort, and awk have followed for decades.

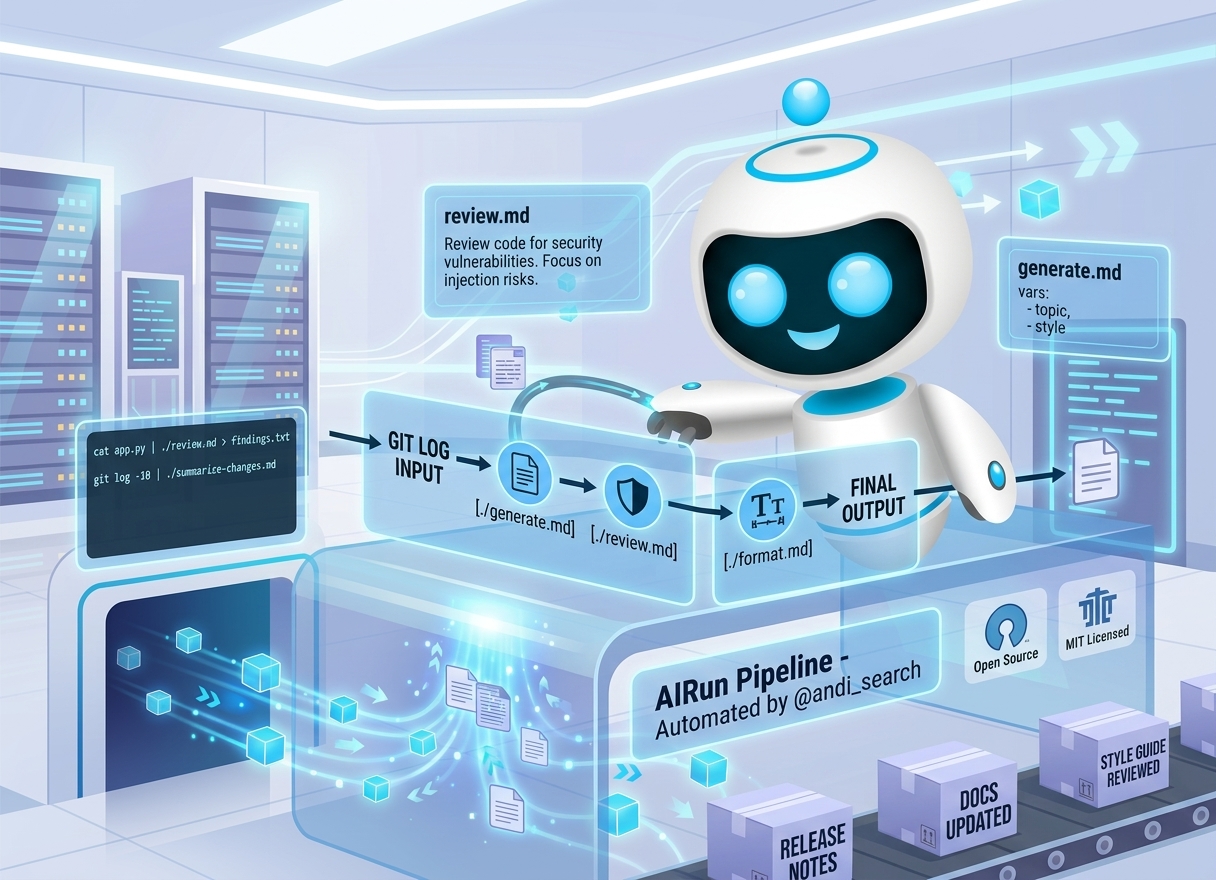

AIRun turns plain-text prompts into executable programs. You write in English, run from the terminal, and compose scripts with pipes and redirects. The tool handles provider configuration, model selection, and output streaming. Your prompts become version-controlled scripts that anyone on your team can run against Claude Code, OpenAI Codex, a local model on their own machine, or another cloud provider and model, without changing the script itself.

We found it so useful for our own work at Andi that we open-sourced it.

Writing executable scripts

The shebang line tells the system how to run a markdown file once it is marked executable. #!/usr/bin/env -S ai routes the file through AIRun, and flags after it set the model and behavior:

#!/usr/bin/env -S ai --opus --live

--opus selects Claude Opus. --live streams output in real time with a heartbeat indicator during tool calls, so you know work is happening even when the model is thinking. For CI/CD pipelines, --quiet suppresses status messages and gives you clean output only.

Everything below the shebang is your prompt: plain text, markdown formatting, structured instructions. AIRun passes it to the model as-is. A script can be as minimal as the two-line example above or as detailed as you need.
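To make the split between flags and prompt concrete, here is a minimal Python sketch. It is not AIRun's implementation: split_script is a hypothetical helper, and shlex.split only approximates how env -S breaks the shebang line into arguments.

```python
import shlex

def split_script(text):
    """Return (flags, prompt) for a script that starts with an `env -S ai` shebang.

    Hypothetical helper for illustration; AIRun's real parsing may differ.
    """
    first, _, rest = text.partition("\n")
    if not first.startswith("#!"):
        return [], text
    # Approximate env -S word splitting with shlex.
    words = shlex.split(first[2:])
    flags = words[words.index("ai") + 1:] if "ai" in words else []
    # Everything after the shebang line is the prompt.
    return flags, rest.lstrip("\n")

script = (
    "#!/usr/bin/env -S ai --opus --live\n"
    "\n"
    "Summarize these git commits into release notes.\n"
)
flags, prompt = split_script(script)
print(flags)
print(prompt, end="")
```

Here flags holds the two options from the shebang, and prompt holds the English text that would be sent to the model.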

Permission flags control what the AI can do during execution:

#!/usr/bin/env -S ai --skip # skip permission checks

#!/usr/bin/env -S ai --allowedTools 'Bash(npm test)' 'Read' # allow specific tools only

Flag precedence works the way you’d expect: command-line flags override shebang flags, which override saved defaults. Running ai --haiku release-notes.md on a script with --opus in its shebang uses Haiku.
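The precedence rule can be modeled in a few lines of Python. This is a sketch of the rule as described, not AIRun's code: pick_model and MODEL_FLAGS are hypothetical names, and only the two model flags shown in this article are listed.

```python
# Only the model flags that appear in this article; the real list is longer.
MODEL_FLAGS = ("--opus", "--haiku")

def pick_model(cli_flags, shebang_flags, saved_default):
    """Return the model name from the highest-priority source that sets one."""
    # Command line first, then the shebang, then the saved default.
    for flags in (cli_flags, shebang_flags):
        for flag in flags:
            if flag in MODEL_FLAGS:
                return flag[2:]
    return saved_default

# ai --haiku release-notes.md, where the shebang says --opus
print(pick_model(["--haiku"], ["--opus", "--live"], "opus"))
# ./release-notes.md with no command-line flags
print(pick_model([], ["--opus", "--live"], "haiku"))
# no model flag anywhere: fall back to the saved default
print(pick_model([], ["--live"], "haiku"))
```

The three calls resolve to haiku, opus, and haiku respectively, one for each level of the precedence chain.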

Parameterizing prompts with variables

Static prompts handle single tasks. For scripts you run repeatedly with different inputs, declare variables in YAML front-matter:

#!/usr/bin/env -S ai --haiku
---
vars:
  topic: "machine learning"
  style: casual
---
Write a summary of {{topic}} in a {{style}} tone.

The vars: block sets defaults. Double-brace placeholders get replaced at runtime. Override any variable from the command line:

./summarize.md --topic "AI safety" --style formal

Because the defaults are visible in the file itself, the script is self-documenting. Anyone reading it can see what parameters it expects. And because scripts are just text files, they work with git, code review, and any other part of your existing development workflow.
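For readers who want to see the substitution step spelled out, here is a small Python sketch. It assumes the front-matter defaults have already been parsed into a dict; render and parse_overrides are hypothetical helpers, and leaving unknown placeholders untouched is our assumption rather than documented AIRun behavior.

```python
import re

def parse_overrides(argv):
    """Turn ['--topic', 'AI safety'] into {'topic': 'AI safety'}."""
    pairs = {}
    args = iter(argv)
    for arg in args:
        if arg.startswith("--"):
            pairs[arg[2:]] = next(args, "")
    return pairs

def render(template, defaults, overrides=None):
    """Replace {{name}} placeholders, letting overrides win over defaults."""
    values = {**defaults, **(overrides or {})}

    def replace(match):
        # Assumption: a placeholder with no value is left as written.
        return str(values.get(match.group(1), match.group(0)))

    return re.sub(r"\{\{\s*(\w+)\s*\}\}", replace, template)

template = "Write a summary of {{topic}} in a {{style}} tone."
defaults = {"topic": "machine learning", "style": "casual"}

with_defaults = render(template, defaults)
with_overrides = render(
    template, defaults, parse_overrides(["--topic", "AI safety", "--style", "formal"])
)
print(with_defaults)
print(with_overrides)
```

The first line printed uses the defaults from the vars: block; the second reflects the command-line overrides.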

Composing scripts with Unix pipes

AIRun scripts read from stdin and write to stdout, so they compose with each other and with standard Unix tools:

cat data.json | ./analyze.md > results.txt

git log -10 | ./summarize-changes.md

./generate-report.md | ./format-output.md > final.txt

Each script is a filter. Data flows in, processed output flows out. Chain scripts together, redirect to files, or feed results into tools like jq or pandoc. When --live is active, progress updates go to stderr, keeping the stdout pipe clean for anything downstream.

Have an existing document you want feedback on? Pipe it through a review script: cat draft.md | ./review.md. Need to reformat output before sending it somewhere else? Add a formatting script to the chain. The pipeline model means each script stays small and single-purpose, and you can recombine them for different workflows without rewriting anything.
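The filter contract itself is easy to sketch in Python. This is an illustration of the stdin/stdout/stderr split, not AIRun's internals: run_filter is a hypothetical function, and count_lines is a stand-in for the model call.

```python
import io
import sys

def run_filter(transform, stdin=None, stdout=None, stderr=None):
    """Read all input, write the result to stdout, and keep status on stderr."""
    stdin = sys.stdin if stdin is None else stdin
    stdout = sys.stdout if stdout is None else stdout
    stderr = sys.stderr if stderr is None else stderr
    stderr.write("[working]\n")            # progress never touches stdout
    stdout.write(transform(stdin.read()))  # only the result goes downstream
    stderr.write("[done]\n")

def count_lines(text):
    # Stand-in for a model call: report how many commits came in.
    return f"{len(text.splitlines())} commits summarized\n"

# Simulate `git log --oneline -2 | ./summarize-changes.md` with in-memory streams.
source = io.StringIO("a1b2c3 fix typo\nd4e5f6 add tests\n")
out, err = io.StringIO(), io.StringIO()
run_filter(count_lines, stdin=source, stdout=out, stderr=err)
print(out.getvalue(), end="")
```

Because status messages land in err rather than out, the next program in a pipeline sees only the result.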

Running scripts on any model

Every example above uses Claude, but the same scripts run on other models without changes. AIRun supports AWS Bedrock, Google Vertex, Azure, Anthropic API, and Vercel AI Gateway through provider flags, plus local inference through Ollama and LM Studio. It also supports OpenAI’s Codex as an interpreter and harness:

ai --aws --opus # AWS Bedrock

ai --ollama --model minimax-m2.5:cloud # Ollama

ai --vercel --model openai/gpt-5.2-codex # OpenAI via Vercel

ai --codex # Codex as interpreter

Through Vercel AI Gateway, you can reach 100+ models across OpenAI, xAI, Google, Meta, Mistral, and DeepSeek. For local inference, Ollama runs open-source models like GLM-5 and DeepSeek without API costs. Everything stays on your machine. LM Studio handles MLX-optimized models on Apple Silicon.

Your scripts don’t need to know which backend runs them. The same release-notes.md from the opening example works whether you call ai --aws release-notes.md or ai --ollama release-notes.md. Model selection happens at the invocation layer, not inside the prompt.

If you hit a rate limit on one provider mid-task, --resume continues the conversation on another:

ai --aws --resume

For providers you use regularly, --set-default saves your preference:

ai --aws --opus --set-default

After that, running ai without flags uses AWS Bedrock with Opus automatically.

Getting started

git clone https://github.com/andisearch/airun.git && cd airun && ./setup.sh

This installs the ai command to /usr/local/bin. If you already use Claude Code, AIRun works with your existing subscription right away. For other providers, add API credentials to ~/.ai-runner/secrets.sh; they’re loaded automatically.

Write a test script and run it:

#!/usr/bin/env -S ai --haiku --live

Explain what a Dockerfile does, in two sentences.

Save it as explain.md, then make it executable and run it:

chmod +x explain.md && ./explain.md

From here, try piping data through (git log | ./explain.md), adding variables, or switching providers with ai --ollama explain.md. The repo’s scripting guide (docs/SCRIPTING.md) covers pipelines, permission modes, and provider setup in more depth.

AIRun is MIT-licensed and open source at github.com/andisearch/airun. Run ai update to pull the latest version.